This is another blog entry about ZFS and about something you have to keep in mind when working with it. It’s nothing really astonishing, you just don’t think about it very often. I suspect that for most of you I’m not telling anything new. In the end it’s just part of my braindump project.

Clever storage

There are customers using storage or SAN components that do some kind of storage tiering at a granularity smaller than a LUN.

The idea is to have frequently accessed blocks on fast storage like SSD and less frequently accessed blocks on slow storage like rotating rust. This is a good concept. On any given LUN not all data is equally important. There may be data that is accessed very often, but there will be data that is accessed every few weeks as well. While you want the first on very fast storage, it doesn’t matter much for the second kind of data. So separating the data can really help to drive down the need for very fast storage and for many loads this idea works very well. I’ve used it with a lot of success.

The decision about what counts as frequently accessed is essentially made by counting how often you access an area of the LUN. The specifics of an implementation may vary, as may the size of these areas, but in the end it practically always boils down to this.

However, the challenge starts when one layer tries to outsmart another layer in placing data without having the proper information. In this case the COWness of ZFS and the tiering are trying to outsmart each other.

The challenge with ZFS pools

In short: those tiering mechanisms may not yield the expected results, because a core principle of copy-on-write filesystems may interfere with a core principle of auto tiering based on counting block accesses. And ZFS is a copy-on-write filesystem. The reason is quite simple and I will explain it for ZFS.

As I already wrote on a multitude of occasions, ZFS never overwrites data persisted to disk in place, it always writes the changed data at a new place. It’s the central axiom of ZFS and a lot in ZFS is essentially based on this axiom.

And this is the issue: a block read or a block write has no further meaning for the storage. If you use iSCSI or FC, it’s all just blocks from the perspective of the storage. It just gets commands to read or write a block (well, it’s a bit more complex, but in the end it boils down to this for the purpose of this example).

Let’s assume you write something to disk. ZFS chooses block 4711 for this. The data is evicted from the cache after a while. Then some application wants to access the data. It reads it from disk, let’s say 5 times over the next day. Let’s assume you consider data that is accessed 5 times within 24 hours important enough to warrant migration to SSD, so you get over the threshold of “this is important data”. The storage migrates it to SSD. You read it again. You get it from SSD now. It’s fast now. Up to now, no issue.

However now you change it. For example it’s a block of a database. ZFS doesn’t write it at the same location, it writes it to block 815. The storage doesn’t know that it’s the hot data from block 4711. There is no connection between the data in block 4711 and the write to 815. ZFS isn’t telling the storage because it can’t. They are just independent SCSI commands requesting or writing some data.

If you use rotating rust as the default storage tier, your write will go to rotating rust and not to SSD, and the process of deeming the data important enough to move it to SSD starts all over again.

Of course you could default to SSD for writing. However, then you need enough SSD storage to cope with the write load until the tiering process has done its job. Interestingly, it may at first migrate your most-used blocks to rotating rust together with the rarely used ones, because of caching. Why will become obvious later.
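The block 4711 and block 815 scenario can be sketched as a toy simulation. This is a minimal sketch and not how any real array works: the class `TieringArray`, the function `run` and the simplification of counting reads without a 24-hour window are all my assumptions for illustration. Only the threshold of 5 reads and the block numbers come from the example above.

```python
from collections import Counter

PROMOTE_THRESHOLD = 5  # reads needed before a block is moved to SSD (from the example)


class TieringArray:
    """Hypothetical array that counts reads per block address (no time window)."""

    def __init__(self):
        self.read_counts = Counter()
        self.on_ssd = set()

    def read(self, block):
        self.read_counts[block] += 1
        if self.read_counts[block] >= PROMOTE_THRESHOLD:
            self.on_ssd.add(block)  # promote the hot address to the fast tier

    def write(self, block):
        # The array only sees an address: an overwrite keeps the block's tier,
        # a write to a new address starts on the slow default tier with no history.
        self.read_counts.setdefault(block, 0)

    def tier_of(self, block):
        return "ssd" if block in self.on_ssd else "hdd"


def run(copy_on_write):
    """Write data, read it 5 times, change it; return the tier before and after."""
    array = TieringArray()
    location = 4711
    array.write(location)
    for _ in range(5):
        array.read(location)
    tier_before = array.tier_of(location)
    if copy_on_write:
        location = 815  # the changed data lands at a new address
    array.write(location)
    return tier_before, array.tier_of(location)


in_place_result = run(copy_on_write=False)
cow_result = run(copy_on_write=True)
print("overwrite in place:", in_place_result)
print("copy on write:     ", cow_result)
```

With an overwrite in place the data stays on the fast tier after the change. With copy-on-write the array has no history for the new address, so the same data is back on the slow tier.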

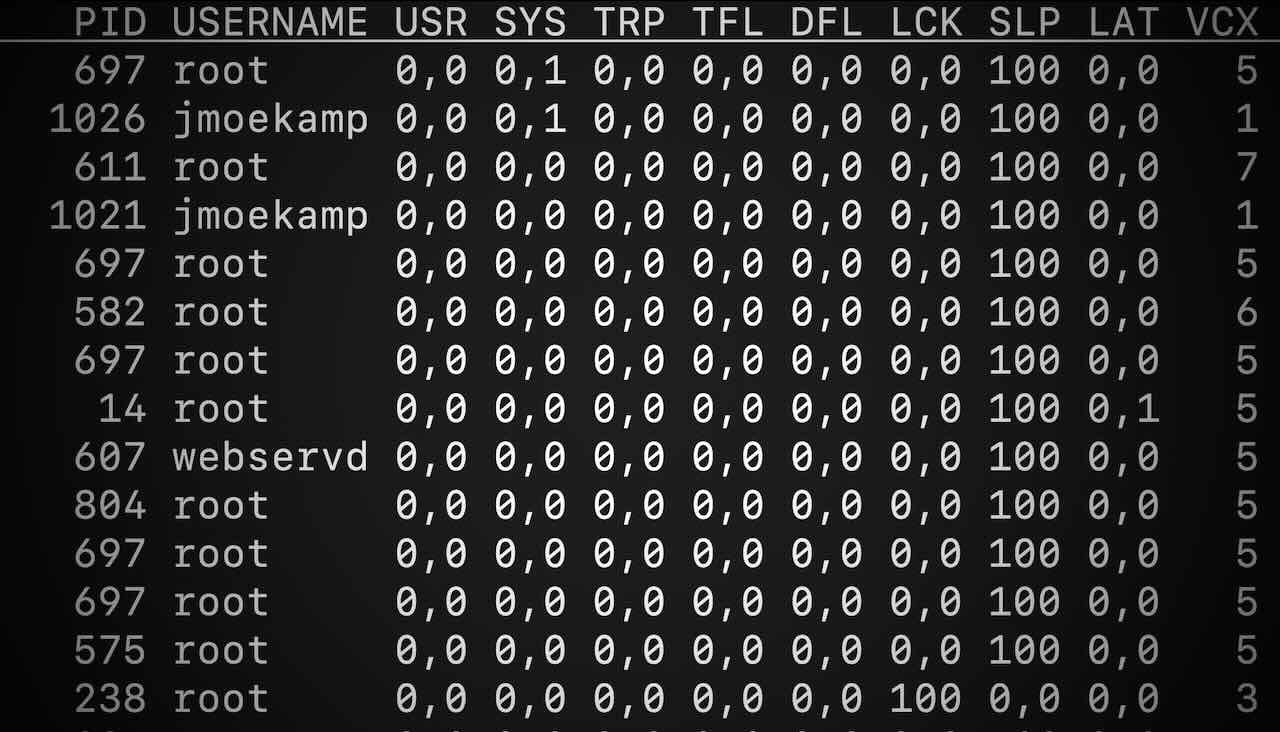

The “never change data in place” paradigm creates a second issue. The point is: Usually you don’t want to access disks. Disks are always slower than RAM, so you cache as aggressively as possible. So really frequently used data is probably in the ARC, and read accesses for it won’t hit the storage at all. So there is nothing to count. It may look like the blocks are not really active.

Reads served from the cache don’t count as accesses, because the storage never sees them and so can’t count them. You want this behaviour, but here it doesn’t help to make the concept of counting work. The “never at the same place” behaviour is a factor here as well and makes it even more problematic.

The challenge with “never change in place” is that you can’t use writes as a proxy for importance either. You can’t assume that an often-written block is an important block, because changed data is always written to a new block. For an extremely important piece of data from the perspective of the server, the storage will see, from a block perspective, one write and one read for every reinitialisation of the ARC (for example at boot time): one write to persist it and one read to get it into the ARC. Then you hope that it stays there the whole time because, as I said, you don’t want storage accesses, as they are always slower than RAM.

From the perspective of the storage you won’t see a second or a third write to the same block, because the data is never overwritten: when the data of this block changes, ZFS writes it to a totally different block. (I have an idea how you could have COW without this and related issues, but I won’t talk about it here.) So by this measure, too, a block would never be important enough to warrant migration to SSD.
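To make the “one write, one read” observation concrete, here is a small sketch of what a counting storage would see behind a RAM cache and copy-on-write. It is not ZFS code: `CountingStorage` and `CachedCowStore` are hypothetical stand-ins, the cache is a plain dictionary without eviction, and `drop_cache` plays the role of a reboot.

```python
from collections import Counter


class CountingStorage:
    """Hypothetical storage that counts reads and writes per block address."""

    def __init__(self):
        self.reads = Counter()
        self.writes = Counter()
        self.blocks = {}

    def read(self, addr):
        self.reads[addr] += 1
        return self.blocks[addr]

    def write(self, addr, data):
        self.writes[addr] += 1
        self.blocks[addr] = data


class CachedCowStore:
    """Hypothetical copy-on-write store with a RAM cache in front of the storage."""

    def __init__(self, storage):
        self.storage = storage
        self.cache = {}      # key -> data held in RAM
        self.location = {}   # key -> current block address
        self.next_free = 0

    def put(self, key, data):
        addr = self.next_free  # never reuse an address: copy-on-write
        self.next_free += 1
        self.storage.write(addr, data)
        self.location[key] = addr
        self.cache[key] = data

    def get(self, key):
        if key not in self.cache:  # only a cache miss reaches the storage
            self.cache[key] = self.storage.read(self.location[key])
        return self.cache[key]

    def drop_cache(self):
        self.cache.clear()  # e.g. a reboot


storage = CountingStorage()
fs = CachedCowStore(storage)
fs.put("hot", "v1")
fs.drop_cache()
for _ in range(1000):
    fs.get("hot")        # 1000 application reads, one storage read
fs.put("hot", "v2")      # the change goes to a new address
for _ in range(1000):
    fs.get("hot")        # served from RAM

max_reads_per_block = max(storage.reads.values())
max_writes_per_block = max(storage.writes.values())
print(max_reads_per_block, max_writes_per_block)
```

Two thousand application reads and two versions of very hot data leave no block address with more than one read or one write, so a counter per address has nothing to work with.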

Not without advantages

Of course under certain circumstances the automatic tiering will work with a ZFS filesystem and do its job. A block has to fulfil two conditions:

- it isn’t changed for a long time.

- it has frequent accesses that really hit the storage and not only the caches.

Thus it will work quite fine for a server that, for example, provides scanned files which are used quite often at first and then more and more seldom, but are never changed. It will perhaps work for archival storage too, but you would put that on rotating rust drives or in the Oracle Cloud anyway.

Not a problem at all

However, in the end you can look at this from a different perspective, and then it isn’t an issue at all, as ZFS has some interesting features.

What do you want from tiering and a migration to SSD? You want to be able to persist to disk with ultra low latency and you want to keep the most important stuff on faster storage. Why not do this for all the data?

ZFS has a similar mechanism to storage tiering built in, which is custom built for ZFS. It’s called Hybrid Storage Pools (HSP).

ZFS can use different disks for special loads:

- separated ZIL aka write acceleration SSD aka Writezillas/Logzillas

- L2ARC aka read acceleration SSD aka Readzillas

- Metadevices primarily used to accelerate deduplication access (I wonder why nobody uses the name Metazillas for this)

Hybrid storage pools can accelerate writes by directing the writes to the ZIL to an SSD separate from the pool disks. So you get the low write latency of SSD even when your main pool consists of rotating rust disks. Hybrid storage pools can implement an SSD-based second-level cache, so data that isn’t used frequently or recently enough to stay in the caches can be fetched from faster SSD. And metadevices can solve the problem that dedup tables can grow larger than memory, which can be detrimental to performance.

It’s not classical tiering, but it uses different tiers of storage to accelerate the accesses that matter most, which enables you to use rotating rust for the largest part of the data. It doesn’t move data (again, the central ZFS axiom). Even when an L2ARC device fails it doesn’t matter: technically what’s on it is not persisted data but evicted cache, and the persisted data is on the pool disks.

So for ZFS it’s much better to configure your central storage to provide an SSD-based LUN for write acceleration and rotating rust LUNs for the pool disks, both without any tiering enabled, and to put some ultrafast SSD locally in your server for read acceleration. That way the read acceleration doesn’t even load your SAN, and you have direct access via PCIe without going over any fabric. Of course you could put your read-accelerating SSD in the central SAN as well, but that would mean an additional fabric hop.
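As a rough illustration of such a layout, the following sketch only assembles a `zpool create` command line with a separate log device and a cache device; it doesn’t execute anything. The pool name and all device names are hypothetical placeholders, and the helper `zpool_create_command` is mine. The data LUNs are added as plain top-level devices here, which assumes that the SAN takes care of redundancy.

```python
def zpool_create_command(pool, data_luns, log_lun, cache_dev):
    """Build the argument list for `zpool create` with a log and a cache device."""
    return ["zpool", "create", pool, *data_luns, "log", log_lun, "cache", cache_dev]


cmd = zpool_create_command(
    pool="tank",                                 # hypothetical pool name
    data_luns=["san_hdd_lun0", "san_hdd_lun1"],  # rotating rust LUNs, tiering disabled
    log_lun="san_ssd_lun0",                      # SSD LUN for the separated ZIL
    cache_dev="local_nvme0",                     # local SSD used as L2ARC
)
command_line = " ".join(cmd)
print(command_line)
```

In a real setup you would replace the placeholders with the device names your system reports and probably mirror the log device.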

So you can still reap the advantages of auto tiering for all the other loads on your storage. I just would not use it with ZFS, or to be exact: I would use it only after very careful consideration. In the end you can always say “Nah, for these LUNs used by ZFS I don’t want tiering” and configure them as an HSP like I described above. For other filesystems and loads tiering will potentially work much better, and you can use it there.

Informed decisions

The point is that making better decisions means making informed decisions. When you let ZFS do this work, it actually knows a lot about what’s going on in its filesystems. It knows, for example, whether a write was synchronous or asynchronous from the perspective of the syscall. It knows when to write a block to the write-accelerating SSD or directly to rotating rust, even when you use the same LUNs per pool, because you told it with the logbias property. It knows which blocks are the most lukewarm ones no matter where they are written on disk, because the L2ARC is fed from the soon-to-be-evicted parts of the ARC; it doesn’t migrate or copy data from the pool disks to the read acceleration SSD based on a counter that may or may not trigger. The SSD for write acceleration can potentially be much smaller as well. I often calculate with 5 times the volume your ZFS writes in a single transaction group commit, which usually happens every few seconds. And that’s most often a rather small area.
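The rule of thumb for the write acceleration SSD is simple arithmetic. In the following sketch only the factor of 5 comes from the text; the transaction group interval of 5 seconds and the write rate of 200 MB/s are assumptions for the sake of the example, so measure your own numbers before sizing anything.

```python
def slog_size_bytes(write_bytes_per_second, txg_interval_seconds=5, factor=5):
    """Rule of thumb: `factor` times the volume written in one transaction group."""
    bytes_per_txg = write_bytes_per_second * txg_interval_seconds
    return factor * bytes_per_txg


# Assumed load: 200 MB/s of writes, one transaction group commit every 5 seconds.
size = slog_size_bytes(200 * 10**6)
print(f"{size / 10**9:.0f} GB")
```

Under these assumptions one transaction group holds 1 GB, and five times that is 5 GB, which is small compared to the SSD capacity a tiering setup that defaults to SSD for writes may need.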

This “informed decisions are better decisions” idea is, by the way, the reason why Oracle implemented the Oracle Intelligent Storage Protocol (OISP) for the ZFS Storage Appliance: the database informs the storage. When you use it with the Oracle Database, the database tells the storage what a block actually is, so the storage can, for example, keep it out of the caches or write it as with “logbias=latency” or “logbias=throughput”. This however works with NFS, in the form of NFS commands directly generated by the database (hence dNFS), and not with FC. And don’t get me started on Exadata, where the tight integration of storage server and database server is one of the main points.

And in the end you can do this in the application as well. You could use partitioning or ADO with the Oracle Database, which definitely knows more about the data than the operating system or the storage, as long as you don’t have protocols like OISP in place (and there the database tells the storage what it knows about the load it is putting on the storage). Or a mailserver may allow you to put the mail, the indexes and the control files in different directories, one on rotating rust, one on SSD, so the storage doesn’t have to deduce this from the access pattern. So you can still have ZFS and use your storage tiers optimally.

Conclusion

So: ZFS may not work well with tiering mechanisms that are based on observing the accesses to blocks. On the positive side, ZFS has the functionality to implement something very effective for using different storage tiers.